Disasters such as earthquakes and conflicts can cause sudden changes in nighttime light. Monitoring these changes is important for understanding disaster impacts and supporting recovery efforts. High-resolution nighttime light (NTL) remote sensing provides detailed observations of human activities and infrastructure conditions at night, making it a valuable data source for disaster assessment. However, NTL images acquired by different satellites often have inconsistent radiometric characteristics, which makes their brightness values difficult to compare directly. Radiometric intercalibration is therefore required to reduce these sensor differences and enable the integration of multi-source NTL datasets.

This recommended practice introduces an automatic radiometric intercalibration workflow for high-resolution nighttime light imagery, using SDGSAT-1 and Yangwang-1 data as the example. The procedure identifies stable pixels, estimates the regression relationship between the sensors, and applies the derived transformation to generate radiometrically consistent images. The workflow is implemented in Python and provided through an open-source GitHub repository, enabling users to integrate multi-source nighttime light datasets and improve disaster monitoring capabilities.

Background

Disasters such as earthquakes and conflicts can cause sudden changes in nighttime light. These changes can be observed by satellites and analyzed using nighttime light (NTL) remote sensing data. High-resolution NTL imagery provides detailed information about human activities and infrastructure conditions at night, making it useful for disaster assessment and recovery monitoring.

To improve monitoring frequency, researchers often combine NTL images from different satellites. However, images acquired by different sensors may have different radiometric characteristics. These differences make the brightness values difficult to compare directly. Traditional radiometric intercalibration methods usually rely on pseudo-invariant pixels (PIPs), which are areas assumed to remain stable over time. In disaster situations, however, stable reference areas can be difficult to identify because large regions may experience significant changes in nighttime illumination.

In this procedure, an automatic radiometric intercalibration approach is introduced to improve the comparability of high-resolution NTL images from different sensors. The workflow is implemented using Python, and the processing scripts are provided through a public GitHub repository. This approach helps integrate multi-source nighttime light datasets and supports more frequent observations for disaster monitoring.

Radiometric Intercalibration Principle

Radiometric intercalibration is a process used to make satellite images from different sensors comparable. Because different satellites may have different sensor designs, spectral responses, and imaging conditions, the brightness values recorded by each sensor may not be directly consistent. Radiometric intercalibration adjusts these differences so that images from different sensors can be analyzed together.

A common approach to radiometric intercalibration is to first identify stable regions that remain relatively unchanged over time. These areas are often referred to as pseudo-invariant regions or pixels. The brightness values from the stable regions in the two images are then used to establish a regression relationship between the sensors. Once the relationship is obtained, it can be applied to transform the image from one sensor into an image that is radiometrically consistent with the reference sensor.

Radiometric Intercalibration Workflow

Remote sensing images acquired from different satellites may show differences in brightness values because of sensor characteristics and imaging conditions. To make multi-source nighttime light (NTL) images comparable, a radiometric intercalibration process is required. In this practice, SDGSAT-1 and Yangwang-1 images are used as an example to demonstrate the intercalibration workflow.

The procedure consists of three main steps: identifying stable pixels, estimating the regression relationship between sensors, and applying the derived transformation to perform radiometric correction.

1. Identification of Stable Pixels

The first step of radiometric intercalibration is to identify stable pixels that show little change in nighttime light intensity. These pixels are often referred to as pseudo-invariant pixels (PIPs).

Because disasters may cause large variations in nighttime light intensity, not all pixels can be used for model fitting. Therefore, only pixels with relatively stable brightness values between the two images are selected as training samples.

To identify candidate pixels, threshold segmentation is applied to both SDGSAT-1 and Yangwang-1 images to determine lit areas. Only pixels that belong to the common lit area in both images are retained for further analysis.

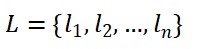
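The lit-area masking described above can be sketched as follows. This is a minimal illustration, not the repository's implementation: it assumes the two images are already co-registered on the same pixel grid, that an SDGSAT-1 pixel counts as lit when the mean of its RGB bands exceeds a threshold, and that both thresholds are chosen by the user for the scene. The function names `common_lit_mask` and `extract_samples` are hypothetical.

```python
import numpy as np


def common_lit_mask(yw1, sdgsat_rgb, yw1_thresh, sdgsat_thresh):
    """Return a boolean (H, W) mask of pixels lit in both images.

    yw1        : Yangwang-1 brightness, shape (H, W)
    sdgsat_rgb : SDGSAT-1 RGB bands, shape (H, W, 3), co-registered with yw1
    thresholds : scene-dependent values (assumed, not taken from the source)
    """
    yw1_lit = yw1 > yw1_thresh
    # Assumption: SDGSAT-1 "lit" is judged on the mean of the three bands.
    sdgsat_lit = sdgsat_rgb.mean(axis=-1) > sdgsat_thresh
    return yw1_lit & sdgsat_lit


def extract_samples(yw1, sdgsat_rgb, mask):
    """Collect candidate training samples from the common lit area."""
    l = yw1[mask]            # Yangwang-1 brightness, shape (n,)
    rgb = sdgsat_rgb[mask]   # SDGSAT-1 RGB values, shape (n, 3)
    return l, rgb
```

The mask only selects candidate pixels; pixels whose brightness changed between the two acquisitions still have to be rejected during the regression step.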

Let the selected stable pixels from Yangwang-1 be

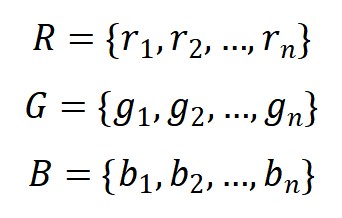

and the corresponding RGB values from SDGSAT-1 be

$$\{(r_i, g_i, b_i)\}, \quad i = 1, \dots, n$$

where n denotes the number of selected stable pixels.

2. Regression-Based Sensor Relationship Estimation

After selecting stable pixels, a regression model is used to establish the relationship between the two sensors.

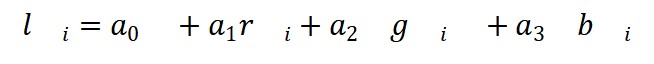

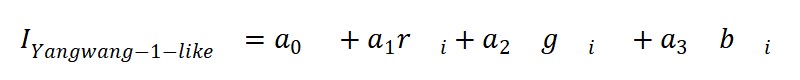

In this practice, the Yangwang-1 brightness value is modeled as a linear combination of the SDGSAT-1 RGB bands. The regression model can be written as

$$l_i = \alpha_0 + \alpha_1 r_i + \alpha_2 g_i + \alpha_3 b_i$$

where

- $l_i$ is the brightness value of the i-th Yangwang-1 pixel

- $r_i$, $g_i$, $b_i$ are the RGB values of the corresponding SDGSAT-1 pixel

- $\alpha_0$, $\alpha_1$, $\alpha_2$, $\alpha_3$ are the regression coefficients
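The text does not state which estimator is used for the coefficients. Assuming ordinary least squares, they can be obtained in closed form by stacking the n stable pixels into a design matrix. The symbols $X$, $\mathbf{l}$ and $\hat{\boldsymbol{\alpha}}$ are introduced here for illustration and do not come from the original:

$$
X = \begin{bmatrix} 1 & r_1 & g_1 & b_1 \\ \vdots & \vdots & \vdots & \vdots \\ 1 & r_n & g_n & b_n \end{bmatrix}, \qquad
\mathbf{l} = \begin{bmatrix} l_1 \\ \vdots \\ l_n \end{bmatrix}, \qquad
\hat{\boldsymbol{\alpha}} = (X^\top X)^{-1} X^\top \mathbf{l}
$$

The leading column of ones corresponds to the constant term, and the remaining three columns to the red, green, and blue bands.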

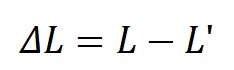

To improve robustness, an iterative regression process is used to remove outliers. The difference between the observed and predicted values is calculated as

$$\Delta L = L - L'$$

where L denotes the observed Yangwang-1 brightness values and L' denotes the predicted Yangwang-1 brightness values obtained from the regression model.

Pixels with large residual errors are removed from the training dataset, and the regression model is recalculated until the model converges.
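The iterative fit can be sketched as below. The text does not specify the rejection rule or the convergence test, so this sketch makes its own assumptions: the model is fitted by ordinary least squares, a pixel is rejected when its absolute residual (observed minus predicted Yangwang-1 brightness) exceeds `k` times the standard deviation of the residuals of the pixels currently kept, and the loop stops once no further pixel is rejected. The function name `fit_iterative` and the parameters `k` and `max_iter` are hypothetical.

```python
import numpy as np


def fit_iterative(l, rgb, k=3.0, max_iter=20):
    """Fit l ~ a0 + a1*r + a2*g + a3*b while iteratively dropping outliers.

    l   : Yangwang-1 brightness of candidate pixels, shape (n,)
    rgb : SDGSAT-1 RGB values of the same pixels, shape (n, 3)
    Returns (coeffs, keep), where keep marks the pixels used in the final fit.
    """
    l = np.asarray(l, dtype=float)
    rgb = np.asarray(rgb, dtype=float)
    # Design matrix: a column of ones for the constant term, then R, G, B.
    X = np.column_stack([np.ones(len(l)), rgb])
    keep = np.ones(len(l), dtype=bool)

    for _ in range(max_iter):
        coeffs, *_ = np.linalg.lstsq(X[keep], l[keep], rcond=None)
        residuals = l - X @ coeffs
        sigma = residuals[keep].std()
        # Assumed rule: reject pixels more than k standard deviations off.
        new_keep = keep & (np.abs(residuals) <= k * sigma)
        # Stop when nothing more is rejected, or too few samples would remain.
        if new_keep.sum() == keep.sum() or new_keep.sum() < 5:
            break
        keep = new_keep

    # Final refit so the coefficients always match the returned mask.
    coeffs, *_ = np.linalg.lstsq(X[keep], l[keep], rcond=None)
    return coeffs, keep
```

A smaller `k` rejects changed pixels more aggressively but also discards more genuinely stable ones, so the value is worth checking against the scene at hand.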

3. Radiometric Intercalibration

Once the regression relationship between the two sensors has been obtained, it can be applied to all pixels of the SDGSAT-1 image.

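The transformed SDGSAT-1 brightness value can be calculated as

$$I_{\text{Yangwang-1-like}} = \alpha_0 + \alpha_1 R + \alpha_2 G + \alpha_3 B$$

where $I_{\text{Yangwang-1-like}}$ represents the Yangwang-1-like image generated from SDGSAT-1 data, and R, G, B are the SDGSAT-1 band values at each pixel.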

After this transformation, the resulting image has brightness values that are consistent with Yangwang-1 observations, allowing the two datasets to be used together for further analysis.
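Applying the fitted coefficients to a whole image is a per-pixel linear combination of the three bands. The sketch below assumes the coefficients are ordered as constant term, red, green, blue. Clipping negative predictions to zero is an added assumption rather than part of the described method, so it is exposed as an option. The function name `to_yangwang_like` is hypothetical.

```python
import numpy as np


def to_yangwang_like(sdgsat_rgb, coeffs, clip_negative=True):
    """Convert an SDGSAT-1 RGB image, shape (H, W, 3), to a Yangwang-1-like image.

    coeffs : (a0, a1, a2, a3) = constant term and weights for R, G, B
    """
    a0, a1, a2, a3 = coeffs
    rgb = np.asarray(sdgsat_rgb, dtype=float)
    out = a0 + a1 * rgb[..., 0] + a2 * rgb[..., 1] + a3 * rgb[..., 2]
    if clip_negative:
        # Assumption: negative brightness is not physical, so clip to zero.
        out = np.clip(out, 0.0, None)
    return out
```

The output has shape (H, W) and can be compared with the Yangwang-1 image pixel by pixel.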

This practice can be applied to disaster events anywhere in the world, provided that overlapping high-resolution NTL images from both sensors are available.

Advantages

- Robustness and Flexibility: The workflow can be applied to different regions and disaster cases. SDGSAT-1 and Yangwang-1 serve as the case example, and the method can be extended to intercalibrate other high-resolution nighttime light datasets.

- Consistency: The regression model converts SDGSAT-1 RGB imagery into Yangwang-1-like images, ensuring similar radiometric scales and spatial patterns so that multi-sensor nighttime light datasets can be directly compared.

- Temporal coverage: By integrating multi-source nighttime light imagery, the method improves observation frequency and enables monitoring of dynamic changes in human activities after disasters.

- Reproducibility: The workflow is computationally efficient and implemented in Python, with open-source scripts available on GitHub so users can reproduce the process and adapt it to other datasets.

Disadvantages

- Data availability: The method requires high-resolution nighttime light datasets from multiple sensors. In regions where such data are unavailable or limited, the intercalibration workflow may be difficult to apply.

- Stable pixels: The approach relies on the presence of sufficient pseudo-invariant pixels. In areas with rapid nighttime light changes, such as large disasters or fast urbanization, identifying stable pixels may be challenging.

GitHub repository

https://github.com/UN-SPIDER-Wuhan/ntl_rad_intercalibration.git

References

- Yin, Z., Li, X., Tong, F., Li, Z., & Jendryke, M. (2020). Mapping urban expansion using night-time light images from Luojia1-01 and International Space Station. International Journal of Remote Sensing, 41, 2603-2623.